End-to-end Deep Learning Optimization of a Compressive Spectral Video Camera

Kareth LEON

Postdoc, Université de Toulouse, INP‑IRIT‑ENSEEIHT

April 6th, 2023

Abstract

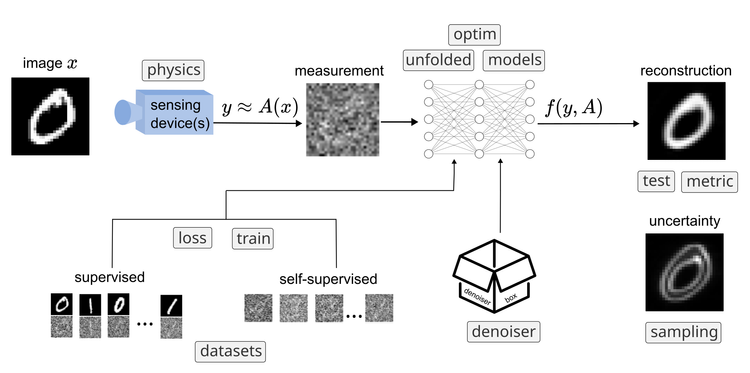

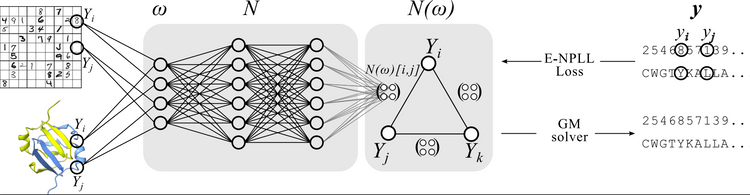

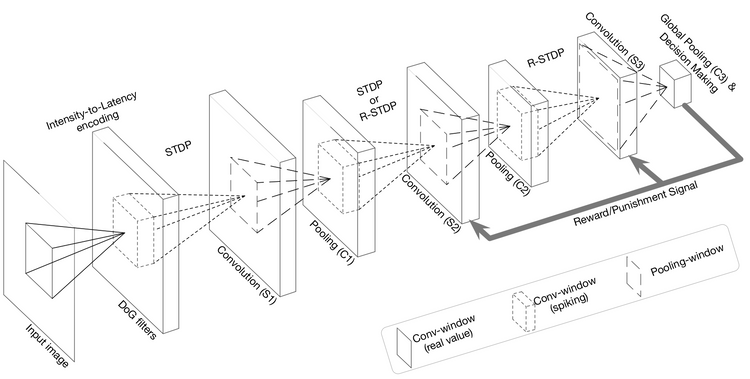

Spectral videos, which include multispectral and hyperspectral dynamic scenes, capture spatial information across several wavelengths at successive instants of time. They are relevant in applications such as defense and security, agriculture, and medicine. Acquiring and processing spectral video remains challenging because of the curse of dimensionality inherent to such high-dimensional data. Compressive spectral video sensing (CSVS) addresses this by providing a framework that exploits the high correlation in the data and reduces dimensionality by encoding and compressing the spatial-spectral information with a data-independent coded aperture (CA). To date, however, there is no prior work on jointly designing the sensing and reconstruction stages of CSVS systems, where the spectral information across time is valuable. In this talk, I will present an end-to-end (E2E) deep learning approach to jointly design the CA and the reconstruction method under the CSVS framework. The proposed formulation takes advantage of state-of-the-art denoising networks to provide two-stage learning that exploits the spatial-spectral and spatial-temporal correlations. Simulations on two collections of real multispectral videos show the advantages of the E2E design, with PSNR improvements of 1 dB over deep-learning approaches and 5 dB over traditional iterative approaches.
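To put the reported PSNR gains in perspective, the short sketch below converts a gain in dB into the corresponding reduction in mean squared error. It assumes the standard definition PSNR = 10·log10(peak² / MSE) with a fixed peak value; the abstract does not state the exact evaluation protocol, and the function names `psnr` and `mse_reduction_factor` are illustrative only.

```python
import math


def psnr(mse: float, peak: float = 1.0) -> float:
    """Standard PSNR in dB for a given mean squared error and peak signal value."""
    return 10.0 * math.log10(peak ** 2 / mse)


def mse_reduction_factor(psnr_gain_db: float) -> float:
    """Factor by which the MSE shrinks for a given PSNR gain at a fixed peak value."""
    return 10.0 ** (psnr_gain_db / 10.0)


# Gains quoted in the abstract (the mapping to MSE is an assumption-based illustration).
for gain_db in (1.0, 5.0):
    factor = mse_reduction_factor(gain_db)
    print(f"{gain_db:.0f} dB gain -> MSE divided by {factor:.2f}")
```

Under this definition, a 1 dB gain corresponds to roughly a 1.26-fold reduction in MSE, and a 5 dB gain to roughly a 3.16-fold reduction.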
